ringo888

Hi Guys,

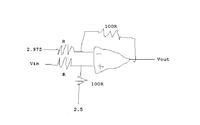

I have a differential amplifier circuit with an input voltage range of 2.95-3.00V. I want to expand that range by putting 2.5V on the +ve input of the amp and using a gain of 10, to give me an output range of 0.9V to 5V in 0.1V steps.

The issue is that when I simulate the circuit, the output voltage goes down, and after 2.7V it goes to 0V (btw the amp is common mode, no negative). So my question is: why is this happening, and how do I resolve it? I was using the equation (V1-V2)*(Rf/Rg).
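For reference, here is a rough sketch of what that equation predicts. It rests on several assumptions that are not confirmed by the schematic: V1 is the fixed 2.5V on the +ve input, V2 is the swept input, Rf/Rg = 10, and the output can only swing between 0V and 5V. The function name and the rail values are made up for illustration.

```python
def diff_amp_output(v1, v2, gain, rail_low=0.0, rail_high=5.0):
    """Ideal difference-amp output (V1 - V2) * (Rf / Rg), plus a rail-limited value.

    rail_low / rail_high are assumed supply limits, not taken from the circuit.
    """
    ideal = (v1 - v2) * gain
    # An amp with no negative supply cannot drive its output below rail_low.
    limited = min(max(ideal, rail_low), rail_high)
    return ideal, limited


if __name__ == "__main__":
    v1 = 2.5     # assumed: fixed reference on the +ve input
    gain = 10.0  # assumed: Rf / Rg
    for v2 in (2.0, 2.25, 2.5, 2.75, 2.95, 3.0):
        ideal, limited = diff_amp_output(v1, v2, gain)
        print(f"V2 = {v2:.2f} V  ideal = {ideal:+.2f} V  rail-limited = {limited:.2f} V")
```

Under these assumptions the ideal result falls as V2 rises and turns negative once V2 exceeds V1, which an output limited to 0V cannot reproduce.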

Any help would be appreciated. I've attached a JPG of my circuit.

Thanks,
Ringo
